Better Judgments: What good judgments are missing

In 2026, are we still evaluating the world the right way?

In early February 2026, my coauthors Lucille Geay and Caroline J. Charpentier and I published a paper arguing that misinformation can only be fought effectively by rethinking judgment in terms of plausibility estimation and confidence calibration. Specifically, in a world that is becoming increasingly uncertain and intractable, we want to strengthen people’s ability to evaluate the plausibility of incoming information in all its forms, and to calibrate their confidence in their judgments to the strength of the available evidence: skills that apply across domains and do not rely on external arbiters of truth. Because human judgments are formed under bounded resources and multiple goals, we concluded that it is necessary to study and implement metacognitive training that acts on evaluative processes, rather than focusing exclusively on better detection of binary truth.

What we did not address in that paper is the impact of frontier AI on human judgments. With the advent of superforecaster AIs, the gap between AI and human forecasting abilities is narrowing, to the point that we can expect decisions to be better supported by AI than by humans in the near future.

If plausibility estimation and confidence calibration are central to judgment, what happens to them when calibrated probabilistic support becomes inexpensive, universal, and increasingly better than what most humans can generate? In 2027, will it still be worth improving judgment skills, and if yes, what exactly should we train?

To answer this question, we first need to understand why the current information environment forces us to build stronger probabilistic, metacognitive skills. Part 2 is available here.

The world is large, and the information market is inappropriate

As routine problems become easier to solve, the problems that resist easy solutions are getting harder. Information is more specialized, more diverse, and more complex; entities are increasingly entangled with one another; and our cognitive resources are ill-equipped to process high volumes of content. In that sense, the world is becoming Larger: more uncertain and more intractable.

One consequence is that people struggle to form appropriate judgments when facing new or unusual claims, including misinformation. Unfortunately, competition in open information markets seems unable to resolve today’s information-related risks.

In theory, a free information market (where information is promoted organically) should lead to the emergence of truth: open debate, diversity of perspectives, and freedom of expression are assumed to favour convergence toward accurate beliefs. For the information market to function as such a marketplace of ideas, two premises must hold: first, it must be composed of individuals naturally inclined to maximize truth; second, adding more information must lead to better judgments[1].

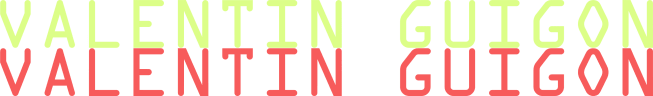

As we argued in the paper, the first premise does not hold naturally. People rarely engage with information solely to assess its truth value. They navigate overlapping problems under competing motivational and cognitive constraints; they seek, share, and avoid information based on a web of incentives shaped by its anticipated practical, social, and emotional value, in addition to its truth value (Sharot & Sunstein, 2020, Nature Human Behaviour; Cosme et al., 2025, PNAS Nexus). On top of that, the information market is strained and distorted by the attention economy. Overall, information circulates within a system where platforms set the rules of visibility, social dynamics (such as homophily and network structure) condition reception, and individuals decide how to act through value-based decision-making.

For these reasons, socially desirable but factually uncertain content may spread further than well-established truths that carry social costs; emotionally costly content may be investigated less; and instrumental content with long-term downsides may be prioritized over alternatives. As a result, at the individual level, people have limited bandwidth to produce an informed judgment. At the collective level, higher-quality information rarely gets the upper hand.

Because modern information environments do not reliably select for truth, it falls on individuals to form adapted judgments. Institutions have responded with strong policies (e.g., strengthening digital literacy, fostering critical thinking, raising awareness of cognitive biases). Although these solutions may end up affecting evaluative processes, their primary focus is to supply individuals with additional information and with strategies for sorting, filtering, and parsing it. Sadly, it is far from guaranteed that “when encountering new information, individuals can attend to its relevant features, extract evidence in accordance with externally validated criteria of accuracy, and update their beliefs in proportion to the evidence” (Guigon, Geay and Charpentier, 2026, Communications Psychology).

To see why such a strategy is incomplete[2], we need a model of judgment defined for large worlds rather than imported from small-world norms.

Human judgments in large worlds

Most real-world decisions occur under uncertainty and complexity[3]. For a given problem, people rarely know the full space of outcomes, cannot assign precise probabilities, and cannot compute optimal solutions even if they exist. Complex problems also favor long-term drift: a misguided decision informs the next one down the line, and so on. In the same way, overconfidence in a dubious source conditions which claims get investigated next, which shapes the evidence base for downstream judgments, which in turn affects what actions get taken. As the complexity of the environment increases, so does the cost of such chains of errors.
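A back-of-the-envelope sketch makes the compounding concrete. Assume, purely for illustration, that each judgment in a chain is independently correct with the same probability `p_correct`. This is a simplification, since in the scenario above an early error tends to make later ones more likely. Under that assumption, the chance that a chain of `n` judgments contains no error at all is p**n. With p = 0.9, a 10-step chain is error-free only about 35% of the time.

```python
def error_free_probability(p_correct: float, chain_length: int) -> float:
    """Probability that every judgment in a chain is correct, assuming each
    step is independently correct with probability p_correct (illustrative)."""
    if not 0.0 <= p_correct <= 1.0:
        raise ValueError("p_correct must be a probability")
    return p_correct ** chain_length


# Even a fairly reliable judge accumulates errors over longer chains.
for n in (1, 5, 10, 20):
    print(n, round(error_free_probability(0.9, n), 3))
```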

Forming good judgments in large worlds requires estimating the probabilities of events and outcomes while managing constraints: limited time, limited information, limited computation. These are worlds of Poker rather than Tic-Tac-Toe, where the crux of judgment is recognizing when you don’t know enough to be confident and proportioning your certainty to the evidence you actually have.

The formal norms that have long defined “rational judgment” (complete and stable preferences, logical consistency, coherence with probability theory, maximization of subjective expected utility) were built for small worlds. They presuppose situations in which all relevant states, outcomes, and probabilities are known, the problem is well defined, and optimal solutions can in principle be computed[4].
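To make the small-world presupposition concrete, here is a minimal sketch of subjective expected utility maximization on a toy umbrella problem. The states, probabilities, and utilities are invented for illustration. The point is that the rule can only run once the whole table is filled in, which is exactly what large worlds fail to provide.

```python
# A "small world": every state, probability, and utility is known in advance.
# The states, probabilities, and utilities below are hypothetical.
STATE_PROBS = {"rain": 0.3, "dry": 0.7}
UTILITIES = {
    "take_umbrella": {"rain": 0.0, "dry": -1.0},
    "leave_umbrella": {"rain": -10.0, "dry": 0.0},
}


def expected_utility(action: str) -> float:
    """Probability-weighted sum of utilities over all known states."""
    return sum(p * UTILITIES[action][state] for state, p in STATE_PROBS.items())


def seu_choice() -> str:
    """Maximize expected utility: only well defined because the table is complete."""
    return max(UTILITIES, key=expected_utility)


print({action: expected_utility(action) for action in UTILITIES}, seu_choice())
```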

In large worlds, one needs to let go of the idea of maximizing a single objective (truth, expected utility, pleasure, the right action). Maximization presupposes a single well-defined objective and an unconstrained solution space. Because large worlds offer neither, we must replace maximization with compromise: trading off competing goals under time, information, and computational limits. In those worlds, one doesn’t need the optimal solution; one needs a good-enough solution (satisficing).
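The contrast between maximizing and satisficing can be sketched in a few lines. In this illustration, the option values are hypothetical and options are assumed to be encountered in a fixed order. The maximizer must inspect every option. The satisficer stops at the first option that clears an aspiration level, accepting a slightly worse outcome in exchange for less search.

```python
from typing import Optional, Sequence, Tuple


def maximize(values: Sequence[float]) -> Tuple[int, int]:
    """Inspect every option; return (index of best option, options inspected)."""
    best = max(range(len(values)), key=lambda i: values[i])
    return best, len(values)


def satisfice(values: Sequence[float], aspiration: float) -> Tuple[Optional[int], int]:
    """Inspect options in order and stop at the first one meeting the aspiration
    level. Returns (index, or None if nothing qualifies, options inspected)."""
    for i, v in enumerate(values):
        if v >= aspiration:
            return i, i + 1
    return None, len(values)


options = [0.3, 0.7, 0.85, 0.95, 0.6]  # hypothetical option values
print(maximize(options))        # (3, 5): best option, every option inspected
print(satisfice(options, 0.8))  # (2, 3): good-enough option, found sooner
```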

Heuristics at the efficiency frontier

The infamous heuristics are cognitive strategies for efficiently processing information under uncertainty: fast and frugal processing that leverages environmental structure and selectively uses, weights, or interprets information to make effective judgments. These “good enough” judgments are very often reliable, and sometimes outperform analytical reasoning. They may consist of following simple decision rules, or breaking a problem into smaller steps, rather than mapping the whole problem and computing the entire set of possible state–outcome pairs.
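One well-studied fast-and-frugal heuristic, Gigerenzer and Goldstein’s take-the-best, illustrates what a simple decision rule looks like in practice. It is offered as an example of the family of strategies described above, not as a strategy the paper itself proposes. To judge which of two options scores higher on some criterion (say, which of two cities is larger), it checks cues in order of assumed validity and lets the first discriminating cue decide, never weighing or adding the rest. The cue names, their order, and the city profiles below are hypothetical.

```python
from typing import Dict, List, Optional

# Hypothetical binary cues, ordered from most to least valid (assumed order).
CUE_ORDER: List[str] = ["is_capital", "has_major_airport", "has_university"]


def take_the_best(a: Dict[str, bool], b: Dict[str, bool]) -> Optional[str]:
    """Return "a" or "b" for the option judged higher on the criterion, using the
    first cue (in validity order) on which they differ; None means guess."""
    for cue in CUE_ORDER:
        if a[cue] != b[cue]:
            return "a" if a[cue] else "b"  # one discriminating cue decides
    return None  # no cue discriminates: fall back on guessing


city_a = {"is_capital": False, "has_major_airport": True, "has_university": False}
city_b = {"is_capital": False, "has_major_airport": False, "has_university": True}
print(take_the_best(city_a, city_b))  # decided by the airport cue alone
```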

Biases are intrinsic properties of heuristics. Because a system ignores information, it can operate faster and more efficiently, but it creates byproducts: systematic deviations such as overweighting whatever comes to mind easily, anchoring on an initial figure when estimating a quantity, or treating a familiar claim as more credible than an unfamiliar one regardless of evidence. These errors can be viewed as externalities, the price paid for the very shortcuts that let a system perform well enough.

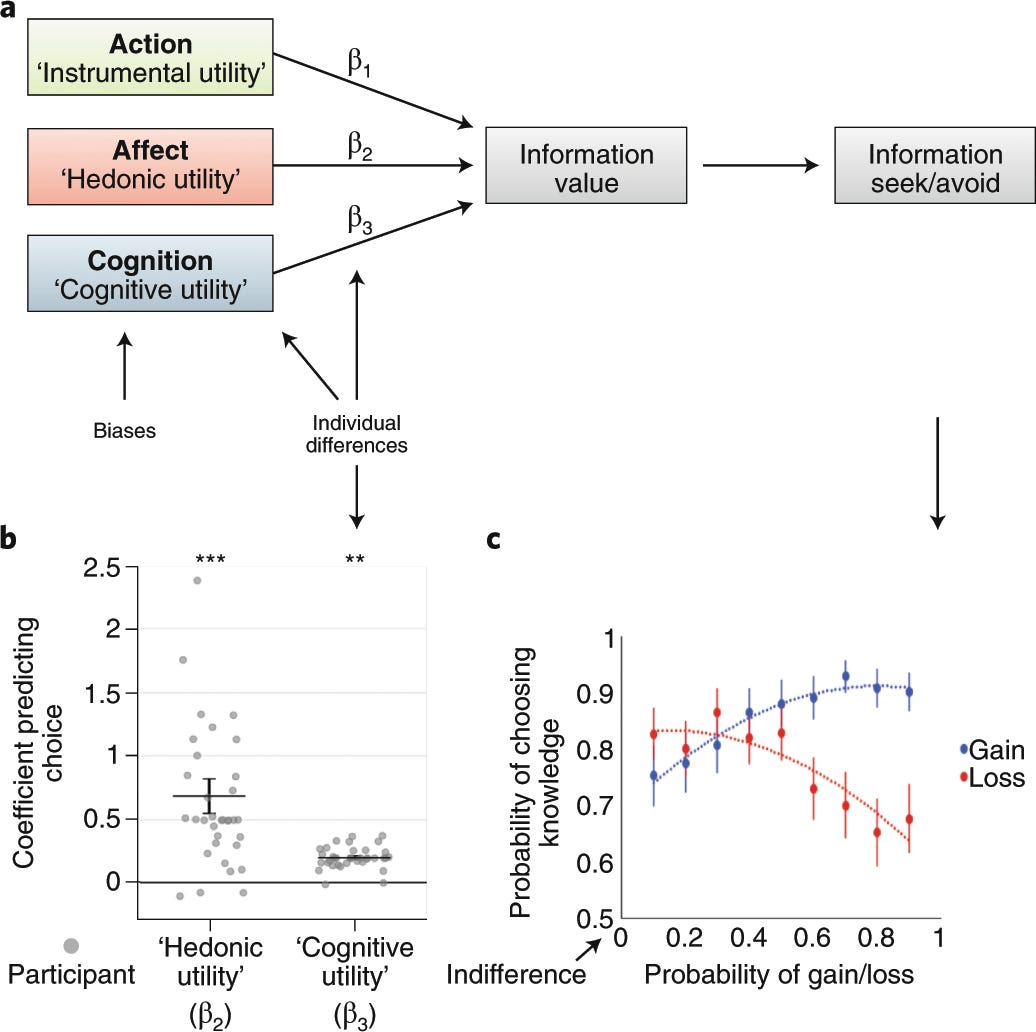

It seems obvious at first that accumulating more information should improve judgment (principle of total evidence). However, gathering information takes time and effort; processing it requires computation; and using it presupposes stable relations between cues and outcomes. Moreover, informational gains are not linear. After a certain point, additional information tends to yield diminishing returns and may even degrade performance. This introduces a cost–accuracy trade-off: for a given limitation on time, effort, or computational resources, there is an efficient frontier beyond which further complexity no longer improves accuracy.
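A toy model makes the frontier visible. Assume, for illustration only, that a judge averages `n` independent noisy observations, so squared error falls as σ²/n, and that each observation costs a fixed amount `c`. Accuracy improves with every extra observation, but by ever smaller amounts, while cost keeps rising linearly. Total loss therefore bottoms out at a finite n. Setting the derivative of σ²/n + cn to zero gives n* = σ/√c. The function names and parameter values are hypothetical.

```python
def total_loss(n: int, sigma: float, cost_per_sample: float) -> float:
    """Squared error of an average of n noisy samples (sigma**2 / n) plus a
    linear cost of gathering them. Both terms are illustrative assumptions."""
    return sigma ** 2 / n + cost_per_sample * n


def best_sample_size(sigma: float, cost_per_sample: float, max_n: int = 1000) -> int:
    """Brute-force search for the sample size with the lowest total loss."""
    return min(range(1, max_n + 1), key=lambda n: total_loss(n, sigma, cost_per_sample))


# The marginal accuracy gain from one more sample shrinks as n grows...
for n in (1, 2, 5, 10, 20):
    gain = total_loss(n, 1.0, 0.0) - total_loss(n + 1, 1.0, 0.0)
    print(n, round(gain, 4))
# ...so with sigma = 1 and a per-sample cost of 0.01, the optimum is n = 10.
print(best_sample_size(sigma=1.0, cost_per_sample=0.01))
```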

To understand why, consider what happens in predictive modeling. A model that takes all available information into account and fits observed data closely does not necessarily perform well when predicting new cases. By absorbing unsystematic variation (noise), complex models overfit: their predictions become unstable outside the training sample. Models predict well when they capture systematic regularities rather than absorbing all available information. Ignoring information constrains the space of possible predictions, but introduces bias.
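The overfitting argument can be checked with a deterministic toy example in plain Python. The data are invented: a true signal y = x with a fixed +1/−1 perturbation standing in for noise. A least-squares line ignores most of that detail; a Lagrange polynomial through all ten points absorbs every wiggle. The interpolant reproduces the training points exactly but misses badly on new points between them, while the “biased” line stays close to the true signal.

```python
from typing import Callable, List, Tuple


def fit_line(xs: List[float], ys: List[float]) -> Callable[[float], float]:
    """Ordinary least-squares line: a simple model that ignores fine detail."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = my - slope * mx
    return lambda x: intercept + slope * x


def fit_interpolant(xs: List[float], ys: List[float]) -> Callable[[float], float]:
    """Lagrange polynomial through every training point: a maximally complex
    model that reproduces the training data exactly, noise included."""
    def predict(x: float) -> float:
        total = 0.0
        for i, (xi, yi) in enumerate(zip(xs, ys)):
            weight = 1.0
            for j, xj in enumerate(xs):
                if j != i:
                    weight *= (x - xj) / (xi - xj)
            total += yi * weight
        return total
    return predict


def mse(model: Callable[[float], float], data: List[Tuple[float, float]]) -> float:
    """Mean squared error of a model on (x, target) pairs."""
    return sum((model(x) - y) ** 2 for x, y in data) / len(data)


# Toy data: true signal y = x plus a deterministic +1/-1 "noise" pattern.
train_x = [float(i) for i in range(10)]
train_y = [x + (1 if i % 2 == 0 else -1) for i, x in enumerate(train_x)]
train = list(zip(train_x, train_y))
# New cases between training points, scored against the true signal.
test = [(x + 0.5, x + 0.5) for x in train_x[:-1]]

line, interp = fit_line(train_x, train_y), fit_interpolant(train_x, train_y)
print("train MSE  line:", round(mse(line, train), 3), " interpolant:", round(mse(interp, train), 3))
print("test  MSE  line:", round(mse(line, test), 3), " interpolant:", round(mse(interp, test), 3))
```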

Heuristics operate at a sort of efficient frontier, which makes them especially appropriate in large worlds. In these settings, where information is scarce (inherently, or for lack of time and resources), degraded, noisy, or unstable, there is no guarantee that additional information is informative, relevant, or stable. More computation can increase sensitivity to noise rather than improve accuracy. Here, following simple decision rules can outperform analytical reasoning. The flip side is that individuals must regulate how much confidence their heuristics warrant, given the evidence actually available.
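One way to formalize how much confidence a heuristic should authorize (an illustrative choice, not the model from the paper) is to treat a cue’s reliability as an unknown hit rate and update a Beta distribution as evidence comes in, starting from a uniform prior. Two cues with the same observed success rate then warrant very different confidence depending on how many observations back them. The function name and counts are hypothetical.

```python
import math
from typing import Tuple


def cue_validity_posterior(successes: int, trials: int) -> Tuple[float, float]:
    """Mean and standard deviation of a Beta(1 + successes, 1 + failures)
    posterior over a cue's hit rate, starting from a uniform Beta(1, 1) prior."""
    a = 1 + successes
    b = 1 + (trials - successes)
    mean = a / (a + b)
    var = a * b / ((a + b) ** 2 * (a + b + 1))
    return mean, math.sqrt(var)


# Same observed hit rate (80%), very different amounts of evidence.
for k, n in ((4, 5), (80, 100)):
    mean, sd = cue_validity_posterior(k, n)
    print(f"{k}/{n}: estimated validity {mean:.2f} +/- {sd:.2f}")
```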

As the world becomes Larger, we may not want to help people reason like ideal observers in small worlds. Rather, we want to help them form appropriate judgments in large worlds: we want them to learn to estimate uncertainty, proportion confidence to evidence, and recognize the boundaries of their actual knowledge.

Improving human judgment

My coauthors and I argued that if misinformation persists not because people fail to reason but because they reason adaptively under constraints, then interventions should target the mechanisms through which their judgments are formed.

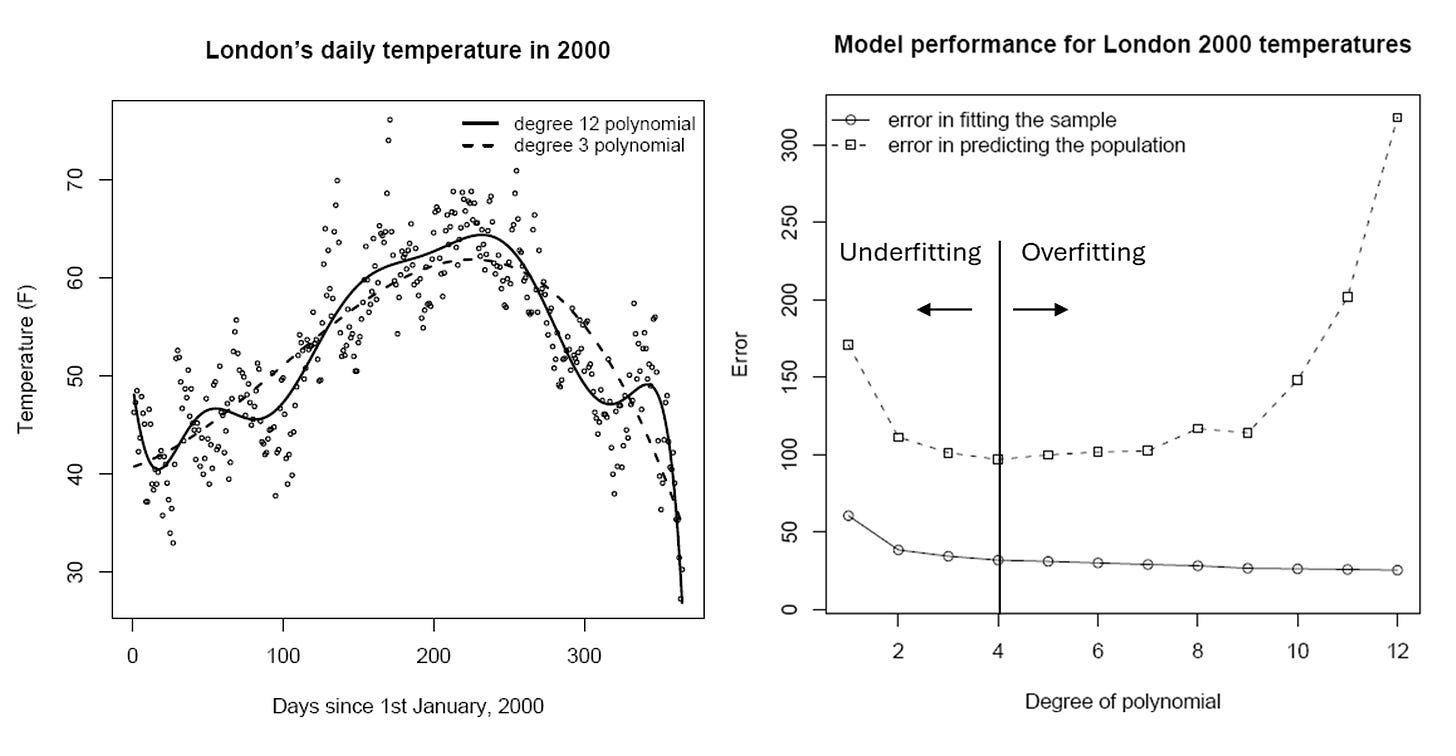

We can typically consider judgment as a categorical, action-relevant decision: X is A rather than B. In formal cognitive accounts, such decisions result from a sequence of operations: selecting a target proposition, estimating the reliability of incoming information, accumulating evidence, and applying a decision rule. This first-order component includes a graded belief about the proposition: an estimated probability that X is A rather than B. But judgment also includes a second-order component: confidence, understood as an estimate of the probability that the judgment is correct. This second-order estimate represents uncertainty about one’s own decision and is therefore metacognitive. Each can go wrong independently. You can have a reasonable sense of a claim’s plausibility and still be wildly overconfident in it.
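The sequence of operations above can be sketched, under strong simplifying assumptions, as a tiny ideal observer. Each piece of evidence is a report supporting A or B from a source with an assumed reliability (the probability that the source reports correctly), and reports are assumed conditionally independent. Evidence accumulates as reliability-weighted log-odds, yielding a graded first-order belief P(A); a threshold decision rule turns it into a categorical judgment; and the ideal observer’s probability of being right serves as the second-order confidence. A person’s reported confidence could then be compared against this value. All names and numbers are hypothetical.

```python
import math
from typing import List, Tuple

# Each report: (claim it supports, assumed reliability of its source in (0.5, 1)).
Report = Tuple[str, float]


def judge(reports: List[Report], prior_a: float = 0.5) -> Tuple[str, float, float]:
    """Accumulate reliability-weighted evidence in log-odds, then apply a decision rule.

    Returns (decision, first-order P(A), ideal-observer confidence in the decision).
    Assumes reports are conditionally independent given the truth.
    """
    log_odds = math.log(prior_a / (1 - prior_a))
    for supports, reliability in reports:
        weight = math.log(reliability / (1 - reliability))  # larger for reliable sources
        log_odds += weight if supports == "A" else -weight
    p_a = 1 / (1 + math.exp(-log_odds))    # graded, first-order belief
    decision = "A" if p_a >= 0.5 else "B"  # categorical decision rule
    confidence = max(p_a, 1 - p_a)         # second-order: P(decision is correct)
    return decision, p_a, confidence


# Two fairly reliable reports for A, one weak report for B.
print(judge([("A", 0.8), ("A", 0.8), ("B", 0.6)]))
```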

Identifying reliable information is mostly a matter of prediction. It requires the ability to assess, under uncertainty, the plausibility of a claim, the trustworthiness of a source, or the need for further inquiry. These judgments depend on specific competencies tied to evidence accumulation and information-seeking behaviors, in particular metacognitive skills such as uncertainty calibration, evaluation of one’s own knowledge, and recognition of one’s and others’ limits (Fleming, 2024, Annual Review of Psychology).
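Calibration can be measured directly once stated confidence is recorded alongside outcomes. The sketch below uses two standard summaries, chosen here for illustration: the gap between mean confidence and accuracy (a simple over/underconfidence index) and the Brier score (the mean squared difference between confidence and the 0/1 outcome, where lower is better). The judge’s record is hypothetical.

```python
from typing import List, Tuple


def calibration_summary(judgments: List[Tuple[float, bool]]) -> Tuple[float, float, float, float]:
    """For (stated confidence, was the judgment correct?) pairs, return
    (mean confidence, accuracy, over/underconfidence, Brier score).

    A positive over/underconfidence value means confidence exceeds accuracy."""
    n = len(judgments)
    mean_conf = sum(c for c, _ in judgments) / n
    accuracy = sum(1 for _, ok in judgments if ok) / n
    brier = sum((c - (1.0 if ok else 0.0)) ** 2 for c, ok in judgments) / n
    return mean_conf, accuracy, mean_conf - accuracy, brier


# Hypothetical judge: 90% sure every time, right only 3 times out of 5.
record = [(0.9, True), (0.9, True), (0.9, True), (0.9, False), (0.9, False)]
print(calibration_summary(record))
```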

Because plausibility estimation and confidence calibration are evaluative skills mobilized in every judgment, they benefit judgments of any kind, and are especially valuable in Large worlds.

1. I mark a distinction between markets in which SEU (subjective expected utility) assumptions can be generalized and the social information market, where SEU assumptions can be, and have been, contested. By the social information market, I mean information circulating across (i) online social networks, such as Google, Meta, and X; (ii) offline social networks, such as family, friends, and the workplace; and (iii) non-network media, such as television, newspapers, and radio.

2. Judgments typically associated with System 1, such as everyday decisions, seem to approach the performance of an ideal Bayesian observer, and heuristics are often near-optimal in trading decision quality against cognitive cost, given computational constraints (Griffiths & Tenenbaum, 2006, Psychological Science; Callaway et al., 2022, Nature Human Behaviour). Deliberative reasoning, on the other hand, can turn into motivated reasoning, producing inaccurate judgments driven by prior beliefs.

3. In small worlds, all states, outcomes, and probabilities are known; problems are well defined; and for those reasons optimizing for an objective (via SEU, Bayesian updating, or backward induction) is appropriate.

4. Savage restricted subjective expected utility theory to ‘small worlds,’ and work by Allais, Ellsberg, and Morgenstern showed systematic violations of its axioms, highlighting unavoidable error in complex settings (Gigerenzer, 2025, Behavioural Public Policy).